My recent blog posts have focused on citizen scientists and the opportunities available to citizen science wannabes. While I love this topic, it seems like a good time to return to another favorite subject – astro imaging. I am very pleased and excited to share with you an email interview I just did with Neil Fleming.

Neil Fleming (image at left) is a wonderful astrophotographer as well as a very nice person. Neil has presented at the Midwest Astro Imaging Conference (MWAIC), the Advanced Imaging Conference (AIC) and the NorthEast Astro Imaging Conference (NEAIC). His images have been shown in Sky and Telescope and Astronomy magazines and on the Astronomy Picture of the Day (APOD) web site. Neil's web site is Fleming Astrophotography.

Included below is my email interview with Neil (images supplied by Neil):

1. You currently specialize in narrowband imaging. What advantages does narrowband imaging have for a person who, like you, lives near a large, light-polluted city (Boston)?

With RGB filters, the captured wavelengths span the entire visible spectrum, so you pick up a lot of light pollution from the surrounding city. This is usually most visible as green and magenta gradients in our images:

You *can* work this problem in Photoshop, but it takes a long time and it is not easy. In addition to having gradients, there is greatly reduced contrast between your object and the skyglow, which means you have to take a lot of images to get anything approaching the quality you'd get from a dark sky location.

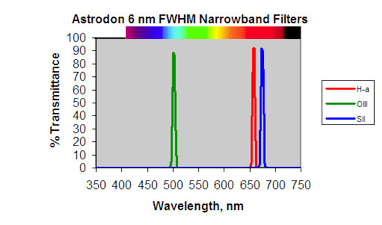

Narrowband imaging gives results that are much better; light pollution has a much reduced effect. This is because narrowband filters let through only the tiniest bandpass of light, the light that is associated with emission lines in nebulae. (Nebulae actually glow like a neon sign, albeit much more faintly <g>.) These filters typically let in a range of the spectrum only 3 to 13 nm in width, depending on the vendor:

- Hydrogen-alpha (Ha) emission wavelength is 656.3 nm (deep red)

- Doubly ionized oxygen (OIII) has its main emission line at 500.7 nm (teal, or in the blue-green crossover)

- Singly ionized sulfur (SII) emits at about 672 nm (even deeper red)
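To put rough numbers on why a narrow bandpass helps, here is a minimal sketch. It assumes the skyglow is spread evenly across the spectrum and that a typical broadband color filter is about 100 nm wide; both are simplifying assumptions for illustration, not figures from Neil (real city light has strong emission lines of its own). Under those assumptions the background drops in proportion to the filter width, while the nebula's emission line still gets through.

```python
def skyglow_reduction(broadband_nm, narrowband_nm):
    """Factor by which an evenly spread background drops with the narrower filter."""
    return broadband_nm / narrowband_nm


# Assumption: rough width of a single broadband color filter, for illustration only.
ASSUMED_BROADBAND_NM = 100.0

# The 3 to 13 nm range quoted above, plus a 6 nm filter in between.
for width_nm in (13.0, 6.0, 3.0):
    factor = skyglow_reduction(ASSUMED_BROADBAND_NM, width_nm)
    print(f"{width_nm:.0f} nm filter: background reduced roughly {factor:.0f}x")
```

The function name and the 100 nm constant are hypothetical; the point is only that halving the bandpass roughly halves the background that competes with the emission line.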

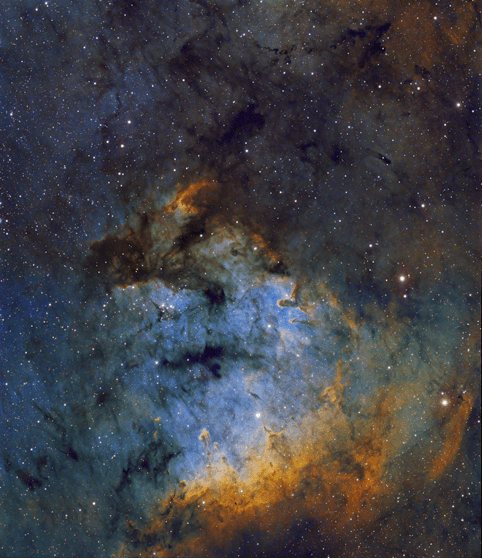

With the main sources of light pollution blocked by these filters, and with the increased contrast, you can get some very good images from a light-polluted location, like this one taken in the Boston area:

2. What advice would you have for a person currently doing RGB processing who wants to try narrowband imaging?

The biggest difference is the length of sub-exposures (subs) you need to take. In Boston, I took 2-5 minute subs for LRGB imaging. For narrowband, you should go at least 30 minutes per sub. This means that you have to use a good mount, and your autoguiding has to be up to par. You also need lots of patience to gather 10+ subs per filter. (That allows for effective removal of problems like satellite and airplane trails.)

3. What about people with no imaging background who would like to learn imaging? Can they just jump into narrowband imaging or should they work on RGB processing first?

It's probably easier to start with RGB, because:

- It is easier to collect a bunch of RGB subs than it is to get the same quantity of those 30-minute narrowband subs.

- You more or less know what the color of an object should look like; you have a benchmark to compare your image to. Narrowband is a lot more free-form in terms of color and most of us prefer to alter the standard colors a bit. So, narrowband is a bit harder to jump right into.

4. Everyone likes to talk equipment. What equipment (including filters) and software do you use?

I started out with a TAK FSQ-106N on a TAK NJP mount, which worked really well for me. I use the Astrodon 6nm narrowband filters, and his GenII LRGB filters. His current 3nm and 5nm narrowband versions are even better. Now, I have access to a couple of setups in New Mexico: a TAK TOA-150/Apogee U-16M and a TMB 203 F/7 with an SBIG STL-6303E, each on a Software Bisque Paramount ME.

For software, I use CCDStack2 and the CCDInspector plugin for initial processing, then Photoshop CS2 for the final work. I have a few Photoshop plugins that I use too: Noel Carboni's Photoshop Actions, Russ Croman's Gradient Xterminator, NeatImage, FocusMagic, and Genuine Fractals. I also use eXcalibrator for image color balancing.

5. Narrowband imaging seems to benefit from long subs and the acquisition of many hours' worth of usable data. Would it be fair to say that the imager's choice of mount is even more important when doing narrowband imaging?

Definitely! The most difficult part of imaging is getting your setup to behave well during autoguiding. The shorter RGB images are easier to acquire than the 30-minute narrowband images. In fact, for the first few months of my entry into this hobby I didn't even autoguide! I just shot 30-second to 1-minute images and played with those. With images that short, autoguiding was not necessary. Most of your astro-imaging budget needs to go into a good mount. You'll also need either a guidescope setup or some sort of off-axis guider like a MonsterMOAG if differential flexure becomes a problem.

6. What factors should be considered when choosing between a wider bandpass filter and a narrower bandpass filter? Any recommendations?

In general, other things being equal, the narrower the bandpass of the filter the better. The overall contrast of your image is better with a narrow filter bandpass. Unfortunately, the narrowest commercially available filters are not cheap! If you have to compromise, go with an OIII filter that is as narrow as possible before moving to the same specification for your Ha and SII filters. Ha and SII emissions are in the deep red part of the spectrum, and are not as bothered by light pollution as OIII. (OIII is in the blue-green crossover, where there is much more light pollution.)

A good setup would be a 3nm OIII, with 5nm-7nm Ha and SII filters.

You can also start with just an Ha filter. The results are terrific, even in monochrome. Here is another Boston-based example:

7. Can you summarize the major differences between processing a narrowband image vs an RGB image?

When producing your initial monochrome channel masters, there is not much difference between producing your red, green, and blue masters and doing your SII, Ha, and OIII masters. The best practices that apply to RGB imaging also apply to narrowband.

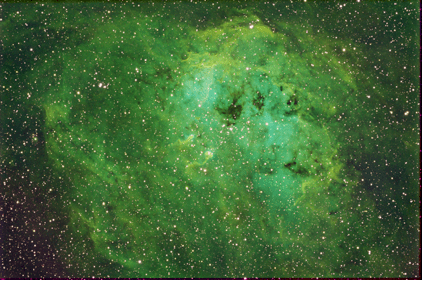

The main difference becomes visible when you do your first color combine. Since Ha is usually much "stronger" (the signal-to-noise ratio, or SNR, is much better) than the OIII and SII, your Hubble palette combine (SII for red, Ha for green, and OIII for blue) gives you a green-dominated result. So, you want to assign the SII and OIII a stronger combine factor, or do an equivalent in Photoshop to "push" the SII and OIII data before your combine. Even when that is done, you'll end up doing more color adjustments, like a "Selective Color" adjustment layer in Photoshop, before you'll be pleased with the results.
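The channel mapping and the idea of a stronger combine factor can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not Neil's CCDStack or Photoshop workflow: the function name, the toy pixel values, and the weights 3.0 and 2.5 are all hypothetical, and the inputs are assumed to be channel masters already stretched to the 0 to 1 range.

```python
import numpy as np


def hubble_combine(sii, ha, oiii, w_sii=1.0, w_ha=1.0, w_oiii=1.0):
    """Map SII -> red, Ha -> green, OIII -> blue, scaling each channel by a weight.

    Inputs are 2-D float arrays in the 0..1 range. The weights are
    illustrative knobs, not values taken from the interview.
    """
    rgb = np.stack([w_sii * sii, w_ha * ha, w_oiii * oiii], axis=-1)
    return np.clip(rgb, 0.0, 1.0)


# Toy 2x2 masters in which Ha is much stronger than SII and OIII.
ha = np.full((2, 2), 0.60)
sii = np.full((2, 2), 0.15)
oiii = np.full((2, 2), 0.20)

# Equal weights: the green (Ha) channel dominates every pixel.
plain = hubble_combine(sii, ha, oiii)

# Pushing SII and OIII harder brings red and blue closer to green.
pushed = hubble_combine(sii, ha, oiii, w_sii=3.0, w_oiii=2.5)
```

A simple linear weight is the crudest possible "push"; in practice the follow-up color adjustments described above do much of the remaining work.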

Here is an example of a simple combine in the Hubble palette:

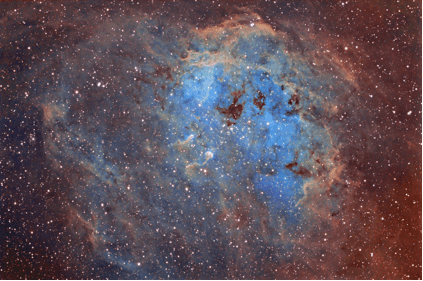

Here is the same data combined with extra weighting to SII and OIII, along with some additional color handling in Photoshop:

An associated problem in narrowband imaging is the magenta/red halos you have around all of your stars. This is because we push the OIII and SII much harder than the Ha, so the halos around the stars suffer from a lack of green, which of course equals magenta. Some folks don't worry about that. Others choose to neutralize the colored halos, like in this example:

8. Do all of your images use the Hubble palette or do you use other color palettes, as well?

I mostly do Hubble palette images. Sometimes, however, there is not much of either SII or OIII for that particular object, so you go in another direction. If you have only two channels, e.g., Ha and OIII, or Ha and SII, you can assign Ha to red, the other filter to blue, and synthesize a green channel for your image. (Noel Carboni's Photoshop actions are great for this!):

Sometimes, an object does really well as a false "true-color" image. For these, I use Ha for red, OIII for green, and OIII plus about 20% of Ha for the blue. (This emulates the H-beta emission that is in the blue part of the spectrum.) Additionally, I'll often overlay true RGB star colors as well:
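The false "true-color" mapping described above (Ha for red, OIII for green, OIII plus about 20% of Ha for blue) could be written like this. It is a minimal sketch that assumes stretched 0 to 1 channel masters; the function and parameter names are hypothetical, and the star-color overlay step is not included.

```python
import numpy as np


def natural_palette(ha, oiii, ha_in_blue=0.20):
    """Build an RGB image from two narrowband channels.

    red   = Ha
    green = OIII
    blue  = OIII + ha_in_blue * Ha   (the Ha fraction stands in for H-beta)
    """
    red = ha
    green = oiii
    blue = oiii + ha_in_blue * ha
    return np.clip(np.stack([red, green, blue], axis=-1), 0.0, 1.0)


# Toy 2x2 masters, values chosen only for illustration.
ha = np.full((2, 2), 0.50)
oiii = np.full((2, 2), 0.30)
rgb = natural_palette(ha, oiii)
```

Keeping the Ha fraction as a parameter makes it easy to try values around the 20% figure and see how the blue channel responds.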

9. Your website has an amazing image of NGC7822 that "includes data from both broadband and narrowband filters". Can you describe at a high level the general strategy and workflow that you followed to process this incredible image?

Here's the overall approach:

- I made sure to collect enough data to get a good SNR in each channel. This takes time!

- In addition to Ha, OIII, and SII data, I also captured some RGB data (for the stars).

- In CCDStack2, I generated the masters for each channel:

  - Calibrated the subs (darks, flats, and bias)

  - Registered or aligned them

  - Normalized or balanced them with respect to one another

  - Did data rejection so as to remove 'outliers' like cosmic ray hits, and satellite/airplane trails

  - Did an average or mean combine.

  - This was all done for the 6 channels: SII, Ha, OIII, Red, Green, and Blue

- Still in CCDStack, I used its tools to remove some of the remaining noise, then used deconvolution tools to improve each channel's resolution and to equalize star sizes. Finally, I saved each narrowband channel as a 'scaled TIF' to import into Photoshop. (The RGB I did as a full-color image in CCDStack, with color balance advice from the excellent freeware program, eXcalibrator.)

- In Photoshop, I:

  - Removed some of the background noise with NeatImage, utilizing the "inverse image layer mask" technique

  - Altered the color away from a green-dominated image towards the more popular blue/gold motif with a Selective Color adjustment layer

  - Star halo color removal:

    - I selected the stars and then used a combination of a Selective Color adjustment layer and a Hue/Saturation adjustment layer to neutralize the magenta/red color. I then drew in the color from the nebula area surrounding the neutralized star halo, so as not to have large gray halos in the image

  - I overlaid the RGB image, and then by using a suitable star mask, I allowed the real star colors to show through on top of the neutral white stars

  - I used one of my favorite Noel Carboni actions, Local Contrast Enhancement, to make the mid-range tones of the image "pop"

  - Finally, on the nebula areas only, I applied just a small amount of FocusMagic deconvolution

- Actually, since this was a two-frame mosaic, I repeated all of the above and put together the mosaic in Photoshop:
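The "data rejection, then mean combine" steps in the workflow above can be sketched with a simple per-pixel clip. The interview does not say which rejection method CCDStack applied, so this uses a median/MAD clip purely as a stand-in; the function name, the `kappa` threshold, and the toy data are assumptions, and the subs are assumed to be calibrated, registered, and normalized already.

```python
import numpy as np


def sigma_clip_mean(subs, kappa=3.0):
    """Average registered subs pixel by pixel, ignoring outlier samples.

    subs  : sequence of 2-D arrays, all the same shape
    kappa : rejection threshold in robust sigmas (illustrative default)
    """
    stack = np.asarray(subs, dtype=float)        # shape (n_subs, height, width)
    center = np.median(stack, axis=0)            # robust per-pixel level
    deviation = np.abs(stack - center)
    # Scaled median absolute deviation approximates the standard deviation.
    sigma = 1.4826 * np.median(deviation, axis=0)
    keep = deviation <= kappa * sigma            # True where a sample is trusted
    # Mean over the kept samples only.
    return (stack * keep).sum(axis=0) / keep.sum(axis=0)


# Ten toy 2x2 subs with small variations, one of them hit by a bright trail.
subs = [np.full((2, 2), 100.0 + (i % 3 - 1)) for i in range(10)]
subs[4][0, 0] = 5000.0                           # simulated satellite trail

master = sigma_clip_mean(subs)
naive = np.mean(subs, axis=0)                    # plain mean keeps the trail
```

This is also why 10 or more subs per filter help: with only two or three samples per pixel there is no reliable way to tell the outlier from the real signal.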

10. You recently started offering astrophotography tutoring services. What prompted that idea and how's it going?

I've always enjoyed teaching and helping others, so I thought I'd provide this service. I enjoy helping folks reduce their learning curve on either the image processing part of our hobby or the equipment setup part. Additionally, the minor incremental revenue helps to defray some of the costs associated with our hobby. At an ad hoc rate of $25/hour, or an average rate of about $20/hour for larger blocks of time, it's certainly not my day job, but the small amount of extra funds helps <g>. I generally have about 2-3 sessions per week, and it also lets me meet some interesting folks more directly.

11. What do you do when you're not producing beautiful astro images?

I work in the computer software field, heading up a group of project managers in a software firm. Hobby-wise I enjoy skiing, sailing, and biking.

Thank you very much for your time!
